A Sentiment Information Collector-Extractor Architecture Based Neural Network for Sentiment Analysis

TL;DR: A new ensemble strategy combines the results of different sub-extractors, making the SIE more universal and enabling it to outperform any single sub-extractor; the resulting model outperforms state-of-the-art methods on three datasets in different languages.

About: This article is published in Information Sciences. It was published on 2018-10-01 and is currently open access. It has received 21 citations to date. The article focuses on the topics of sentiment analysis and deep learning.

Summary


1. Introduction

- Deep learning has made great progress recently and plays an important role in both academia and industry.

- In the example sentences, the same key word appears in two completely different positions.

- In addition, sentence (iii) contains two key words, "not" and "pleasant", which are separated by another word, "been".

- Locating the key words remains a major challenge in sentiment analysis. Researchers have designed many efficient models to capture sentiment information.

- Because an RNN is biased toward later words, it can be less effective at capturing the semantics of a whole sentence: key components can appear anywhere in a sentence rather than only at the end.

3. Model

- Figure 1 shows the architecture of the whole model.

- As illustrated in Figure 1, the model can be divided into two parts: (i) the Sentiment Information Collector (SIC) and (ii) the Sentiment Information Extractor (SIE).

- The SIC produces a sentence information matrix X, which is then fed into the information extractor; the extractor uses a model ensemble strategy to extract latent semantic information.

3.1. Sentiment Information Collector (SIC)

- The authors first describe the architecture of the SIC in their model.

- The left-side context $c_l(v_i)$ of word $v_i$ is calculated using Equation (1), where $e(v_i)$ is the word embedding of $v_i$, a dense vector with $|e|$ real-valued elements.

- The information extractor (SIE), an ensemble model, is designed to extract sentiment information precisely from the sentence information matrix X. The SIE consists of three sub-extractors.

- The authors choose ReLU [31] as the nonlinear activation function.

- When all of the latent semantic vectors $m_j^i$ have been calculated, each sub-extractor applies a max-pooling operation, $m_j = \max_{i=1}^{L} m_j^i$ (Equation 6), where max is an element-wise function.
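The collector-to-extractor path can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' code: each row of X is assumed to concatenate $c_l(v_i)$, $e(v_i)$ and $c_r(v_i)$ (an RCNN-style layout; the order is an assumption), a sub-extractor is assumed to slide a window of k rows over X, apply a linear map plus ReLU to obtain $m_j^i$, and then max-pool element-wise as in Equation (6). The names `build_matrix` and `sub_extractor` and the toy weights are hypothetical; the paper uses 200 kernels per layer, two are used here for readability.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def build_matrix(cl: Matrix, emb: Matrix, cr: Matrix) -> Matrix:
    """Row i of X is the concatenation [c_l(v_i); e(v_i); c_r(v_i)] (assumed order)."""
    return [l + e + r for l, e, r in zip(cl, emb, cr)]


def relu(v: Vector) -> Vector:
    """Element-wise ReLU, the nonlinearity named in the text."""
    return [max(0.0, x) for x in v]


def sub_extractor(X: Matrix, W: Matrix, b: Vector, k: int) -> Vector:
    """One convolutional sub-extractor with window size k (hypothetical helper).

    W holds one row per kernel, each with k * len(X[0]) weights; b holds one
    bias per kernel. Returns the max-pooled vector m_j of Equation (6).
    """
    latent: Matrix = []  # m_j^i for every window start position i
    for i in range(len(X) - k + 1):
        window = [x for row in X[i:i + k] for x in row]  # flatten k rows of X
        pre = [sum(w * x for w, x in zip(w_row, window)) + b_o
               for w_row, b_o in zip(W, b)]
        latent.append(relu(pre))
    # Equation (6): element-wise max over all window positions.
    return [max(col) for col in zip(*latent)]


# Toy usage: a 3-word sentence with 1-dimensional contexts and embeddings.
X = build_matrix([[1], [2], [0]], [[3], [-4], [1]], [[0], [1], [2]])
W = [[1, 1, 1, 1, 1, 1], [-1, 0, 0, 0, 0, 0]]  # two kernels, window k = 2
print(sub_extractor(X, W, [0.0, 0.0], k=2))  # [3.0, 0.0]
```

Each of the three sub-extractors would run this with its own window size and weights, so the SIE receives three pooled vectors per sentence.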

4.1. Datasets

- The Amazon5 and Amazon3 datasets each contain 45,000 training samples and 5,000 test samples per class, randomly selected from the original data source.

- The authors crawled microblogs from the Sina Microblog website (http://weibo.com/), which has grown into a major social media platform with hundreds of millions of users in China.

- The authors removed records whose sentiment tendency is not obvious, leaving 3,000,000 samples.

- The authors use these three datasets as benchmarks to evaluate different models and to explore the influence of parameters in the experiments that follow.
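The per-class counts imply the total size of each Amazon dataset. The short check below assumes that Amazon5 has 5 classes and Amazon3 has 3, as the dataset names suggest; the constant names are illustrative only.

```python
# Per-class counts reported for both Amazon datasets.
TRAIN_PER_CLASS = 45_000
TEST_PER_CLASS = 5_000

# Class counts assumed from the dataset names.
NUM_CLASSES = {"Amazon5": 5, "Amazon3": 3}


def split_sizes(num_classes: int) -> tuple:
    """Return (train, test) totals for a class-balanced dataset."""
    return num_classes * TRAIN_PER_CLASS, num_classes * TEST_PER_CLASS


for name, n in NUM_CLASSES.items():
    train, test = split_sizes(n)
    share = train / (train + test)
    print(f"{name}: {train:,} train, {test:,} test ({share:.0%} train)")
```

This gives 225,000 training and 25,000 test samples for Amazon5 and 135,000 and 15,000 for Amazon3, a 90/10 split in both cases.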

4.2. Pre-training and Word Embedding

- Unlike English, Chinese sentences have no spaces between words, so preprocessing must first split each sentence into words, a step called word segmentation. The authors use an open-source tool called JieBa [33] for this.

- After word segmentation, each sentence is transformed into a sequence of Chinese words.

- Initializing word vectors with those obtained from an unsupervised neural language model is a popular method to improve performance in the absence of a large supervised training set [34, 15, 35].

- The authors use the publicly available word2vec tool to obtain word vectors trained on Amazon reviews for English and on SinaMicroblog posts for Chinese.
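The preprocessing steps above can be illustrated with a toy pipeline. JieBa itself builds a word graph from a prefix dictionary and uses dynamic programming (plus an HMM for unknown words) to pick the most probable segmentation; the sketch below swaps in a much simpler forward maximum-matching segmenter over a tiny hypothetical dictionary, just to show what segmentation produces. It then looks up each word in a pre-trained vector table. Giving unknown words a random vector from U[-0.5, 0.5] is an assumption, not something the summary specifies, and `forward_max_match` and `embed` are hypothetical helpers.

```python
import random
from typing import Dict, List, Set

Vector = List[float]


def forward_max_match(sentence: str, vocab: Set[str], max_len: int = 4) -> List[str]:
    """Greedy left-to-right segmentation (NOT JieBa's algorithm): at each
    position take the longest dictionary word, else a single character."""
    words: List[str] = []
    i = 0
    while i < len(sentence):
        for size in range(min(max_len, len(sentence) - i), 0, -1):
            piece = sentence[i:i + size]
            if size == 1 or piece in vocab:
                words.append(piece)
                i += size
                break
    return words


def embed(words: List[str], table: Dict[str, Vector], dim: int,
          rng: random.Random) -> List[Vector]:
    """Look up pre-trained vectors; unknown words get U[-0.5, 0.5] noise
    (a hypothetical fallback, not specified in the summary)."""
    return [table[w] if w in table else [rng.uniform(-0.5, 0.5) for _ in range(dim)]
            for w in words]


vocab = {"今天", "很", "开心"}  # hypothetical mini-dictionary
tokens = forward_max_match("我今天很开心", vocab)
print(tokens)  # ['我', '今天', '很', '开心']
vectors = embed(tokens, {"今天": [0.1, 0.2], "开心": [0.3, 0.4]}, 2, random.Random(0))
```

A real pipeline would call JieBa for segmentation and load word2vec vectors from file; only the shape of the data (a word sequence, then one vector per word) carries over from this sketch.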

4.3. Experiment Settings

- The models are trained by mini-batch backpropagation with the RMSprop optimizer [36], which is usually a good choice for LSTMs.

- The batch size is 128, and gradients are averaged over each batch.

- Model parameters are randomly initialized from a uniform distribution over [-0.5, 0.5].

- All convolution layers use 200 kernels (with different window sizes), and the BLSTM has 200 hidden units.

- For regularization, the authors apply dropout [37] with probability 0.5 on the final softmax layer in all models.
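The training settings above can be sketched without a framework: uniform initialization over [-0.5, 0.5], per-example gradients averaged over a batch of 128, an RMSprop update, and dropout with p = 0.5. The learning rate (0.001), decay rate (0.9) and epsilon (1e-8) are common defaults assumed here, since the summary does not report them. Inverted dropout (scaling at training time) is also an assumption, and all function names are hypothetical.

```python
import math
import random
from typing import List


def init_params(n: int, rng: random.Random) -> List[float]:
    """Uniform initialization over [-0.5, 0.5], as in the settings above."""
    return [rng.uniform(-0.5, 0.5) for _ in range(n)]


def average_gradients(per_example: List[List[float]]) -> List[float]:
    """Average per-example gradients over the mini-batch."""
    batch = len(per_example)
    return [sum(col) / batch for col in zip(*per_example)]


def rmsprop_step(params: List[float], grads: List[float], cache: List[float],
                 lr: float = 1e-3, rho: float = 0.9, eps: float = 1e-8) -> None:
    """One in-place RMSprop update; `cache` holds the running mean of squared grads."""
    for k, g in enumerate(grads):
        cache[k] = rho * cache[k] + (1.0 - rho) * g * g
        params[k] -= lr * g / (math.sqrt(cache[k]) + eps)


def dropout(x: List[float], p: float, rng: random.Random,
            training: bool = True) -> List[float]:
    """Inverted dropout: zero each unit with probability p, rescale the rest."""
    if not training or p == 0.0:
        return list(x)
    return [0.0 if rng.random() < p else v / (1.0 - p) for v in x]


rng = random.Random(0)
params = init_params(3, rng)
before = list(params)
grads = average_gradients([[1.0, -1.0, 0.0]] * 128)  # batch size 128
cache = [0.0] * len(params)
rmsprop_step(params, grads, cache)
```

RMSprop divides each step by a running root-mean-square of that parameter's gradients, so parameters with consistently large gradients take proportionally smaller steps.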

4.4. Results and Discussions

- In their SICENN model, the SIC has a fixed structure based on the BLSTM model.

- The structure of the SIE is more flexible.

- The following experiments explore three critical factors that influence the effectiveness of the SIE.

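- These factors are the size of the information-extracting windows, the depth of the sub-extractors, and the model ensemble strategy used to combine the sub-extractors.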

4.4.1. Size of information-extracting windows

- To extract sentiment information from the sentence information matrix more precisely, the sizes of the information-extracting windows must be chosen carefully.

- Amazon3 represents reviews from Amazon with 3 categories, and SinaMicroblog contains 2 categories.

- RCNN refers to the model proposed by Siwei Lai et al. [6].

- The word embedding $e(v_i)$ is a pre-trained vector carrying the semantic information of the word, while the context vectors $c_l(v_i)$ and $c_r(v_i)$ are outputs of the BLSTM carrying contextual information.

- The experimental results show that the same window size performs differently on different datasets, which indicates the need for an ensemble strategy that combines the advantages of different window sizes.
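Why window size matters can be seen by enumerating the windows a sub-extractor scans. The sketch below uses a hypothetical sentence built around the key words "not" and "pleasant" separated by "been" (sentence (iii) itself is not reproduced in this summary) and assumes stride-1 windows without padding; `windows` and `covers` are illustrative helpers.

```python
from typing import List, Tuple


def windows(tokens: List[str], k: int) -> List[Tuple[str, ...]]:
    """All contiguous windows of k tokens (stride 1, no padding assumed)."""
    return [tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)]


def covers(tokens: List[str], k: int, a: str, b: str) -> bool:
    """True if some size-k window contains both key words a and b."""
    return any(a in w and b in w for w in windows(tokens, k))


tokens = "the trip has not been pleasant".split()  # hypothetical sentence
for k in (1, 2, 3):
    print(k, len(windows(tokens, k)), covers(tokens, k, "not", "pleasant"))
```

Only windows of size 3 or more see "not" and "pleasant" together, while smaller windows produce more, finer-grained features; which trade-off works best depends on the data, consistent with the dataset-dependent results above.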

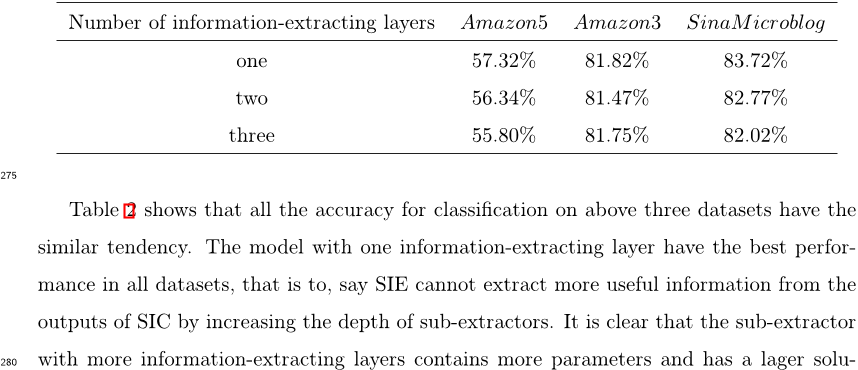

4.4.2. Depth of sub-extractors

- The depth of the sub-extractors is determined by the number of information-extracting layers, which can influence classification accuracy.

- The authors have performed a series of experiments to explore how the depth of the sub-extractors influences the accuracy in the SIE.

- […]tion space than one with fewer layers, but more layers also make the network harder to optimize with backpropagation.

- The experimental results show that a single layer strikes the best balance between these two effects.

- The model ensemble strategy is essential for improving the performance of the information extractor and the classification accuracy.
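The cost side of adding layers can be made concrete. Assuming that deeper sub-extractors stack stride-1 convolution layers with the same window size, and that each layer has 200 kernels as in the experiment settings, the sketch below computes how many tokens one output unit sees and how many weights the stack holds. The input dimension of 600 is purely hypothetical, since the summary does not give the width of X.

```python
def receptive_field(window: int, depth: int) -> int:
    """Tokens seen by one output unit after `depth` stacked stride-1
    convolutions that all use the same window size."""
    return depth * (window - 1) + 1


def conv_params(window: int, in_dim: int, kernels: int = 200) -> int:
    """Weights plus biases of one convolution layer."""
    return window * in_dim * kernels + kernels


def stack_params(window: int, in_dim: int, depth: int, kernels: int = 200) -> int:
    """Total parameters of `depth` stacked layers; after the first layer,
    each layer reads the previous layer's `kernels` feature maps."""
    total, d = 0, in_dim
    for _ in range(depth):
        total += conv_params(window, d, kernels)
        d = kernels
    return total


for depth in (1, 2, 3):
    print(depth, receptive_field(2, depth), stack_params(2, 600, depth))
```

Each extra layer widens the context by only `window - 1` tokens while adding tens of thousands of parameters, which is one way to read the finding that a single layer is the balance point.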

4.4.3. Model ensemble strategy

- Model ensemble strategy can directly impact the effectiveness of the SIE and influence the results of sentiment classification.

- Because the parameters of a neural network are updated iteratively and converge to a local optimum, the initialization of these trainable parameters can influence sentiment classification accuracy.

- Comparing Table 1 and Table 3 shows that the SIE with the model ensemble strategy outperforms every individual sub-extractor.

- In addition, the SICENN model can reach a better accuracy if the weights are initialized properly based on the results in Table 1 for each dataset.

- On Amazon5, the authors initialize the weight variables as (0, 1, 0), because the sub-extractor whose information-extracting window has size 2 performs best among all single window sizes, as shown in Table 1.
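The summary does not state how the weight variables combine the sub-extractors. One plausible reading, assumed here, is that each sub-extractor produces a class-score vector and the SIE takes a weighted sum with one trainable scalar per sub-extractor, so initializing the weights to (0, 1, 0) starts training from the window-size-2 extractor alone. The sketch also assumes the three sub-extractors use window sizes 1, 2 and 3 in that order; whether the authors combine probabilities or pooled features, and whether the weights are normalized, is not stated. The scores are made up for illustration.

```python
from typing import List, Sequence


def ensemble(outputs: Sequence[List[float]], weights: Sequence[float]) -> List[float]:
    """Weighted sum of per-sub-extractor class scores (assumed combination rule)."""
    if len(outputs) != len(weights):
        raise ValueError("expected one weight per sub-extractor")
    n_classes = len(outputs[0])
    return [sum(w * out[c] for w, out in zip(weights, outputs))
            for c in range(n_classes)]


def predict(scores: List[float]) -> int:
    """Index of the highest-scoring class."""
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical scores from sub-extractors with window sizes 1, 2, 3 on one sample.
outputs = [[0.6, 0.4], [0.2, 0.8], [0.5, 0.5]]
init_weights = [0.0, 1.0, 0.0]  # the Amazon5 initialization described above
print(ensemble(outputs, init_weights))  # [0.2, 0.8]
```

During training the three weights would be updated by backpropagation along with the other parameters, so (0, 1, 0) is only a starting point rather than a fixed selection.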

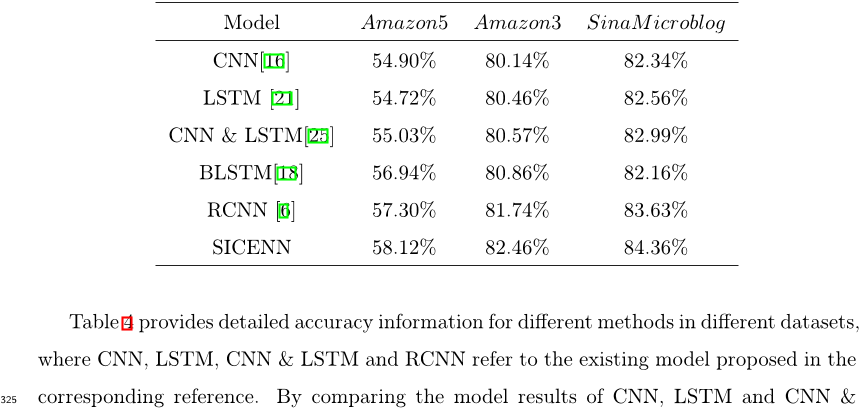

4.5. Comparison of Methods

- The authors compare their method with widely used artificial neural networks for sentiment analysis, including the model of Siwei Lai et al. [6], which has itself been compared against other state-of-the-art models.

- Compared with the RCNN model, the improvements of SICENN on Amazon3 and SinaMicroblog are 0.72% and 0.73%, respectively.

- Experimental results on the various datasets also show that their model outperforms previous state-of-the-art approaches.

- In future work, the authors may build more sophisticated ensemble models and incorporate additional structures, such as attention models, to extract sentiment information from sentences more precisely.



References


12 Feb 2016. TL;DR: A sentence-level neural model is proposed to address the limitation of pooling functions, which do not explicitly model tweet-level semantics and gives significantly higher accuracies compared to the current best method for targeted sentiment analysis.

Abstract: Targeted sentiment analysis classifies the sentiment polarity towards each target entity mention in given text documents. Seminal methods extract manual discrete features from automatic syntactic parse trees in order to capture semantic information of the enclosing sentence with respect to a target entity mention. Recently, it has been shown that competitive accuracies can be achieved without using syntactic parsers, which can be highly inaccurate on noisy text such as tweets. This is achieved by applying distributed word representations and rich neural pooling functions over a simple and intuitive segmentation of tweets according to target entity mentions. In this paper, we extend this idea by proposing a sentence-level neural model to address the limitation of pooling functions, which do not explicitly model tweet-level semantics. First, a bi-directional gated neural network is used to connect the words in a tweet so that pooling functions can be applied over the hidden layer instead of words for better representing the target and its contexts. Second, a three-way gated neural network structure is used to model the interaction between the target mention and its surrounding contexts. Experiments show that our proposed model gives significantly higher accuracies compared to the current best method for targeted sentiment analysis.

259 citations


12 Feb 2017. TL;DR: An attention-based bidirectional LSTM approach to improve the target-dependent sentiment classification by learning the alignment between the target entities and the most distinguishing features.

Abstract: We present an attention-based bidirectional LSTM approach to improve the target-dependent sentiment classification. Our method learns the alignment between the target entities and the most distinguishing features. We conduct extensive experiments on a real-life dataset. The experimental results show that our model achieves state-of-the-art results.

151 citations

TL;DR: A framework for multi-class sentiment classification based on the improved OVO strategy and the SVM algorithm and the results show that the performance of the proposed method is significantly better than that of the existing methods.

149 citations

TL;DR: Experimental results demonstrate that the new model outperforms the state-of-the-art approaches on seven benchmark datasets for text classification and the learning capability of the proposed method is greatly improved and the classification accuracy is even enhanced significantly by over 2% on some datasets.

120 citations

01 Oct 2017. TL;DR: A novel approach to sentiment analysis through the use of combined kernel from multiple branches of convolutional neural network (CNN) with Long Short-term Memory (LSTM) layers produces a model with the highest reported accuracy on the Internet Movie Database review sentiment dataset.

Abstract: Deep learning neural networks have made significant progress in the area of image and video analysis. This success of neural networks can be directed towards improvements in textual sentiment classification. In this paper, we describe a novel approach to sentiment analysis through the use of combined kernel from multiple branches of convolutional neural network (CNN) with Long Short-term Memory (LSTM) layers. Our combination of CNN and LSTM schemes produces a model with the highest reported accuracy on the Internet Movie Database (IMDb) review sentiment dataset. Additionally, we present multiple architecture variations of our proposed model to illustrate our attempts to increase accuracy while minimizing overfitting. We experiment with numerous regularization techniques, network structures, and kernel sizes to create five high-performing models for comparison. These models are capable of predicting the sentiment polarity of reviews from the IMDb dataset with accuracy above 89%. Firstly, the accuracy of our best performing proposed model surpasses the previously published models and secondly it vastly improves upon the baseline CNN+LSTM model. The capability of the combined kernel from multiple branches of CNN based LSTM architecture could also be lucrative towards other datasets for sentiment analysis or simply text classification. Furthermore, the proposed model has the potential in machine learning in video and audio.

116 citations