All figures (24)

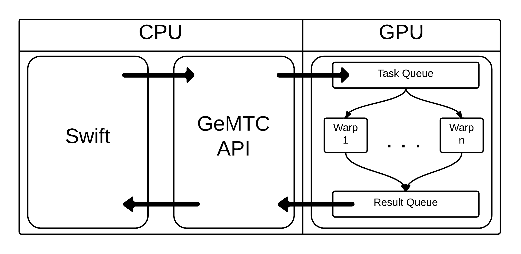

Figure 10: Swift/T stack including GeMTC.

Figure 9: GeMTC implicitly bundles tasks to efficiently utilize PCI bandwidth and latency.

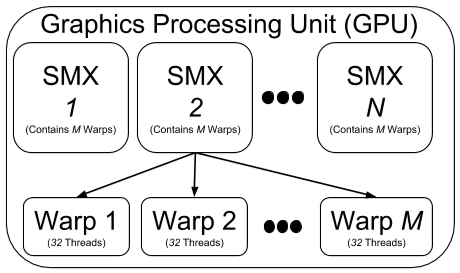

Figure 1: Diagram of GPU architecture hierarchy.

Figure 11: Swift script launching GeMTC.

![Figure 12](/figures/figure-12-diagram-demonstrating-execution-model-for-3heg48tw.png)

Figure 12: Diagram demonstrating execution model for molecular dynamics with replica exchange. Short simulation segments are run in an ensemble with asynchronous data exchanges [24].

Figure 23: Microbenchmark measuring efficiency for tasks with varied granularities on a variety of hardware: a 1344-core NVIDIA GPU, a 60-core Intel Xeon Phi, and a 48-core AMD Opteron SMP.

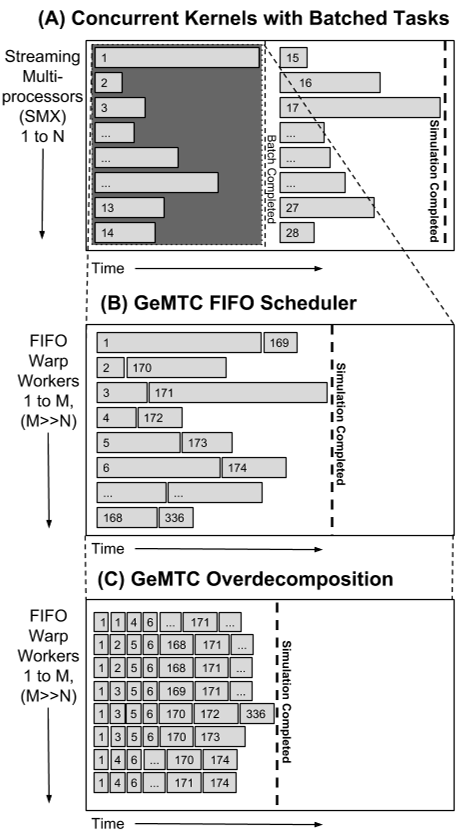

Figure 2: GeMTC FIFO scheduler processes tasks as soon as they are available, rather than blocking on batches for completion. The warps required to execute cases (B) and (C) are provided by all the streaming multiprocessors within the shaded area of (A). While the available hardware remains the same, the number of parallel channels available for concurrent work is increased.

Figure 16: GeMTC and MDLite scaling over 1344 workers on Blue Waters.

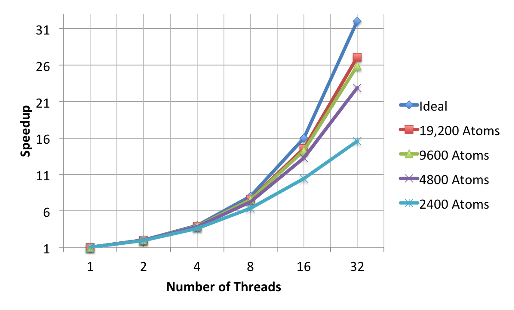

Figure 14: Speedup achieved with varied concurrency (1-32 threads) within a single warp, launching MDLite tasks from 2,500 atoms to 19,200 atoms.

Figure 13: GeMTC scales MD within a single warp and achieves decreased walltime as the level of concurrency within the computation is increased.

Figure 15: GeMTC utilization on the K20X running MD codes with varied worker counts from 1 to 168.

Table 1: GeMTC API

Figure 4: GeMTC Mat-Mul AppKernel

Figure 3: Flow of a task in GeMTC.

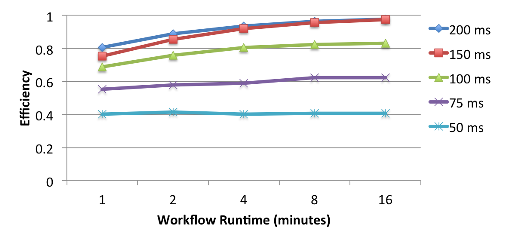

Figure 21: GeMTC + Swift efficiency for varying task granularities up to 512 nodes on Blue Waters with a single GeMTC worker active per node.

Figure 22: Efficiency for workloads with varied task granularities up to 86K independent warps of Blue Waters, with 168 active workers per GPU.

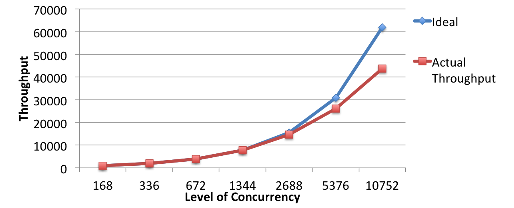

Figure 19: GeMTC + Swift Throughput over 10,000 GPU workers.

Figure 17: Fine-grained Swift CPU workloads on Blue Waters, demonstrating the ability to drive fine-grained workloads with high efficiency.

Figure 20: Single-node efficiency on Blue Waters for parallel work with varied task granularities running 168 GPU workers.

Figure 18: Swift driving GeMTC tasks on a Cray XK7 (K20X-equipped) node of Blue Waters.

Figure 5: Code sample of GeMTC API.

Figure 8: Result of gemtcMalloc on free memory.

Figure 6: GPU Workers interacting with queues.

Figure 7: Memory mapping of free memory available to the device.

23 Jun 2014