131 citations

...As an added measure of fault-tolerance, the proposed algorithm also takes the reliability of the processors into account....

[...]

117 citations

...In addition to backfilling scheduling policy, rate monotonic scheduling algorithm [49, 50] and its variations [51, 52] are proposed to schedule periodical tasks with different priorities in embedded systems....

[...]

103 citations

...Literature examples include Krishna and Shin (1986) and Bertossi et al. (1999) on periodic tasks and (Ghosh et al....

[...]

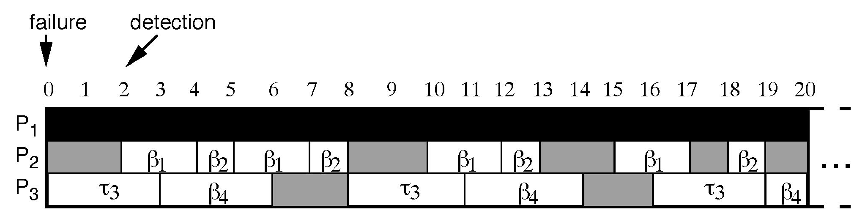

...Literature examples include Krishna and Shin (1986) and Bertossi et al. (1999) on periodic tasks and (Ghosh et al. 1994) on aperiodic tasks. For example, Figure 19 shows an example of scheduling four primary tasks (P1, P2, P3, P4) and their backups (B1, B2, B3, B4) on three processors, as indicated in Ghosh et al. (1994). When the original tasks complete execution, their backups are deallocated and the space can be used for scheduling other tasks....

[...]

96 citations

93 citations

...The well-known Rate-Monotonic First-Fit assignment algorithm was extended in [2]....

[...]

5,397 citations

1,401 citations

...The proposed algorithm determines which tasks must use the active duplication and which can use the passive duplication....

[...]

1,203 citations

...A periodic task $\tau_i$ is completely identified by a pair $(C_i, T_i)$, where $C_i$ is $\tau_i$'s execution time and $T_i$ is $\tau_i$'s request period....

[...]

...Passive copy overbooking and active copy deallocation allow many passive copies to be scheduled sharing the same time intervals on the same processor, thus reducing the total number of processors needed....

[...]

1,108 citations

616 citations

...Dhall and Liu [3] generalized the RM algorithm to...

[...]

...Liu and Layland [10] introduced the Rate-Monotonic (RM) algorithm for preemptively scheduling periodic tasks on a single processor, under the assumption that task deadlines are equal to their periods....
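As a minimal sketch of the single-processor setting described in this snippet, the Python below orders tasks by period (shorter period means higher priority under RM) and applies the well-known Liu and Layland utilization bound, U ≤ n(2^(1/n) − 1), as a sufficient schedulability check. The `Task` class and all function names are hypothetical and chosen for illustration; the snippet itself does not say which test the citing paper uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    c: float  # execution time C_i
    t: float  # request period T_i (deadline assumed equal to the period)


def rm_priority_order(tasks):
    """Return tasks from highest to lowest RM priority (shortest period first)."""
    return sorted(tasks, key=lambda task: task.t)


def liu_layland_bound(n):
    """Utilization bound n * (2^(1/n) - 1) for n tasks under RM."""
    return n * (2 ** (1 / n) - 1)


def passes_utilization_test(tasks):
    """Sufficient (not necessary) check: total utilization within the bound."""
    if not tasks:
        return True
    utilization = sum(task.c / task.t for task in tasks)
    return utilization <= liu_layland_bound(len(tasks))
```

The check is only sufficient: a task set that fails it may still be schedulable under RM, which is why exact tests are also used.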

[...]

...Liu and Layland [10] proposed a fixed-priority scheduling algorithm, called Rate-Monotonic (RM), for solving the (nonfault-tolerant) problem stated in Section 2.1 on a single processor system, that is, when $m = 1$....

[...]

...RM was generalized to multiprocessor systems by Dhall and Liu [3], who proposed, among others, the Rate-Monotonic First-Fit (RMFF) heuristic....
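The first-fit idea behind RMFF can be sketched as follows. This is an illustrative simplification, not the heuristic as published by Dhall and Liu: it assumes tasks are considered in order of increasing period, and it uses the Liu and Layland utilization bound as the per-processor admission test, which may differ from the condition used in [3]. The names `Task`, `fits`, and `rm_first_fit` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    c: float  # execution time C_i
    t: float  # request period T_i


def fits(assigned, candidate):
    """Assumed admission test: utilization bound n * (2^(1/n) - 1)."""
    group = assigned + [candidate]
    n = len(group)
    utilization = sum(task.c / task.t for task in group)
    return utilization <= n * (2 ** (1 / n) - 1)


def rm_first_fit(tasks):
    """Assign each task to the first processor that accepts it.

    Returns a list of processors, each a list of tasks. A new processor
    is opened when no existing one passes the admission test. Assumes
    every task satisfies c <= t.
    """
    processors = []
    for task in sorted(tasks, key=lambda x: x.t):  # increasing period
        for assigned in processors:
            if fits(assigned, task):
                assigned.append(task)
                break
        else:
            processors.append([task])
    return processors
```

For example, `rm_first_fit([Task(2, 4), Task(2, 5), Task(1, 10)])` places the first and third tasks together and opens a second processor for the task with period 5.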

[...]

...Liu and Layland proved the following two important results concerning fixed-priority scheduling algorithms....

[...]

However, further research is needed, e.g., to derive an analytical worst-case bound on the number of processors used by the proposed FTRMFF algorithm, or to devise schedulability conditions which are weaker but simpler than the Completion Time Test, e.g., as those proposed in [1]. This optimization is left for further work. As a subject for future research, the combined duplication scheme proposed in the present paper could be used to extend the Rate-Monotonic First-Fit algorithm in order to tolerate failures also in the presence of resource reclaiming and task synchronization. Finally, further research could deal with assignment strategies which are different from those considered in this paper.
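For readers unfamiliar with the Completion Time Test mentioned above, the sketch below shows the standard fixed-point form of the test for plain (non-fault-tolerant) RM on one processor, assuming deadlines equal periods and integer task parameters. It is not the fault-tolerant variant used by FTRMFF, and the names `Task`, `completion_time`, and `completion_time_test` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    c: int  # execution time C_i
    t: int  # request period T_i (deadline assumed equal to the period)


def completion_time(task, higher_priority):
    """Worst-case completion time of `task`, or None if it misses its deadline.

    Iterates W = C_i + sum(ceil(W / T_j) * C_j) over higher-priority
    tasks j until W stops changing or exceeds the period.
    """
    w = task.c + sum(h.c for h in higher_priority)
    while w <= task.t:
        nxt = task.c + sum(math.ceil(w / h.t) * h.c for h in higher_priority)
        if nxt == w:
            return w
        w = nxt
    return None


def completion_time_test(tasks):
    """True if every task meets its deadline under RM priorities."""
    ordered = sorted(tasks, key=lambda x: x.t)  # shortest period first
    return all(
        completion_time(task, ordered[:i]) is not None
        for i, task in enumerate(ordered)
    )
```

Because the test iterates to a fixed point for every task, it is exact but more expensive than a closed-form utilization check, which is the trade-off the paragraph above alludes to.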