115 citations

...The first two Mel cepstral coefficients were modified (excluding the log-energy coefficient) in order to maximise intelligibility of speech in noise as given by an approximated version of the glimpse proportion measure (Cooke, 2006; Valentini-Botinhao et al., 2012a)....

[...]

...To create the ‘TTSGP’ type a Mel cepstral coefficient modification method (Valentini-Botinhao et al., 2012b) was applied to the spectral parameters generated by the TTS type....

[...]

...audio power reallocation based on the Speech Intelligibility Index (Sauert and Vary, 2010, 2011) or glimpse proportion (Tang and Cooke, 2012), cepstral extraction based on the glimpse proportion measure (Valentini-Botinhao et al., 2012a), and the insertion of small pauses (Tang and Cooke, 2011)....

[...]

73 citations

...To enhance the spectral envelope a noise-dependent optimisation based on the glimpse proportion measure was performed [29]....

[...]

28 citations

...We then proposed a method to extract cepstral coefficients which maximized the GP measure (Valentini-Botinhao et al., 2012a)....

[...]

...Our solution to this was to modify the generated speech instead (Valentini-Botinhao et al., 2012b), by modifying the Mel cepstral coefficients....

[...]

1,741 citations

...To train, adapt and generate speech we extracted: 59 Mel cepstral coefficients with α = 0.77, Mel scale F0, and 25 aperiodicity energy bands extracted using STRAIGHT [8]....

[...]

374 citations

...A further extension proposed in this paper is the possibility of using this method for Mel cepstral coefficients, which can provide higher speech quality with fewer coefficients [6]....

[...]

...We can represent the spectrum by $M$-th order Mel cepstral coefficients $\{c_m\}_{m=0}^{M}$ in the following manner [6]:...

[...]

...where α is a warping factor which can be chosen to represent, for instance, the Mel scale [6]....

[...]

In future work, the authors plan to investigate reallocating energy across time. They also plan to operate under a loudness constraint rather than an energy constraint.

The authors used two stopping criteria: error convergence and a maximum distortion threshold, set to a 10% relative increase in the Euclidean distance between the STEP representations of the original and modified speech.

To train, adapt and generate speech the authors extracted: 59 Mel cepstral coefficients with α = 0.77, Mel scale F0, and 25 aperiodicity energy bands extracted using STRAIGHT [8].
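To give a feel for what the warping factor α does, the sketch below evaluates the frequency warping of a first-order all-pass filter, which is the warping commonly paired with Mel cepstral analysis. The formula is an assumption here, since the text above does not state it, and the function name is hypothetical.

```python
import math

def warped_frequency(omega, alpha):
    """Phase response of a first-order all-pass filter with factor alpha.

    omega: normalised angular frequency in radians, 0 <= omega <= pi.
    alpha: warping factor (0.77 in the text above); alpha = 0 gives no warping.
    Assumed formula: omega + 2 * atan(alpha * sin(omega) / (1 - alpha * cos(omega))).
    """
    return omega + 2.0 * math.atan2(alpha * math.sin(omega),
                                    1.0 - alpha * math.cos(omega))

# Compare a few frequencies before and after warping with alpha = 0.77.
for omega in (0.1, 0.5, 1.0, 2.0):
    print(f"{omega:.2f} rad -> {warped_frequency(omega, 0.77):.3f} rad")
```

For a positive α the mapping stretches low frequencies and compresses high ones while keeping the end points 0 and π fixed, which is how a single parameter can approximate a perceptual scale.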

For the listening test, the authors used 32 native English speakers, who listened to the noisy samples over headphones in soundproof booths and typed in what they heard.

The Glimpse Proportion (GP) measure for speech intelligibility in noise [3] is the proportion of spectral-temporal regions called glimpses where speech is more energetic than noise.
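As a rough illustration of this definition, the following Python sketch counts the fraction of time-frequency cells in which the speech level exceeds the noise level. It assumes both signals are already available as time-frequency level matrices in dB (for example, STEP representations); the function name and the `threshold_db` parameter are hypothetical, and the default of 0 dB simply mirrors the "more energetic than noise" wording above rather than any particular published setting.

```python
import numpy as np

def glimpse_proportion(speech_db, noise_db, threshold_db=0.0):
    """Fraction of spectro-temporal cells where speech exceeds noise.

    speech_db, noise_db: arrays of identical shape (channels x frames),
        holding levels in dB (assumed input format).
    threshold_db: margin by which speech must exceed noise to count
        as a glimpse (assumed parameter; 0 dB by default).
    """
    speech_db = np.asarray(speech_db, dtype=float)
    noise_db = np.asarray(noise_db, dtype=float)
    if speech_db.shape != noise_db.shape:
        raise ValueError("speech and noise must have the same shape")
    glimpses = speech_db > noise_db + threshold_db
    return float(glimpses.mean())

# Toy example: 2 channels x 2 frames.
speech = [[10.0, 0.0], [5.0, 5.0]]
noise = [[0.0, 10.0], [5.0, 0.0]]
print(glimpse_proportion(speech, noise))
```

In the toy example, two of the four cells have speech strictly above noise, so the function returns 0.5.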

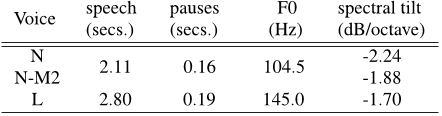

If such data are not available, then the authors can apply noise-independent modifications at the feature level based on known acoustic properties of Lombard speech, such as F0 increase, flattening of spectral tilt and duration stretch [1].

The intelligibility gains obtained by the full Lombard voice L over the N-L voice reflect the impact of changes in duration patterns, F0 and the aperiodicity parameters that define the excitation signal, as pointed out in Table 2.

The authors decided to use an average voice model rather than building a speaker-dependent voice because the normal speech dataset was not phonetically balanced.

Moreover, the authors observed that, for the competing talker, the intelligibility gain obtained by the Lombard voice over the modified voice was mainly due to changes in duration, F0 and excitation parameters.