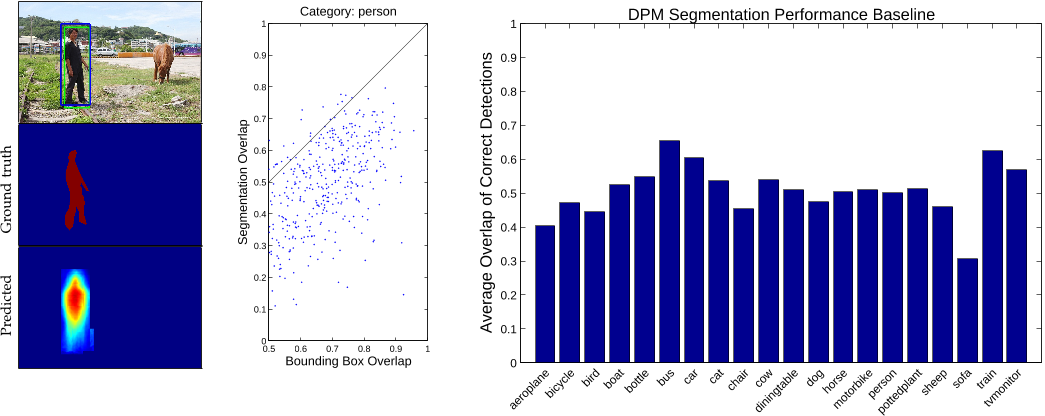

![TABLE 1: Top: Detection performance evaluated on PASCAL VOC 2012. DPMv5-P is the performance reported by Girshick et al. in VOC release 5. DPMv5-C uses the same implementation, but is trained with MS COCO. Bottom: Performance evaluated on MS COCO for DPM models trained with PASCAL VOC 2012 (DPMv5-P) and MS COCO (DPMv5-C). For DPMv5-C we used 5000 positive and 10000 negative training examples. While MS COCO is considerably more challenging than PASCAL, use of more training data coupled with more sophisticated approaches [5], [6], [7] should improve performance substantially.](/figures/table-1-top-detection-performance-evaluated-on-pascal-voc-1sypmu73.png)

...Region proposal methods typically rely on inexpensive features and economical inference schemes....

[...]

...We accomplished this using a surprisingly simple yet effective technique that queries for pairs of objects in conjunction with images retrieved via scene-based queries [17,3]....

[...]

...These include the ImageNet [1], PASCAL VOC 2012 [2], and SUN [3] datasets....

[...]

...Finally, our dataset could provide a good benchmark for other types of labels, including scene types [3], attributes [9,8] and full sentence written descriptions [51]....

[...]

...The dataset is also significantly larger in number of instances per category than the PASCAL VOC [2] and SUN [3] datasets....

[...]

...A novel dataset that combines many of the properties of both object detection and semantic scene labeling datasets is the SUN dataset [3] for scene understanding....

[...]

...The early evolution of object recognition datasets [22], [23], [24] facilitated the direct comparison...

[...]

...Caltech 101 [22] and Caltech 256 [23] marked the transition to more realistic object images retrieved from the internet while also increasing the number of object categories to 101 and 256, respectively....

[...]

Since the detection of many objects such as sunglasses, cellphones or chairs is highly dependent on contextual information, it is important that detection datasets contain objects in their natural environments.

By observing how recall increased as annotators were added, the authors estimate that, in practice, over 99% of all object categories not later rejected as false positives are detected given 8 annotators.
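To build intuition for why recall climbs quickly with more annotators, here is a minimal sketch under a simplifying assumption that is not taken from the source: each annotator independently finds a given category with the same probability. The authors estimated recall empirically, so this is only an illustrative model. The function name `recall_with_annotators` and the per-annotator probability of 0.5 are hypothetical.

```python
def recall_with_annotators(p_single: float, n_annotators: int) -> float:
    """Chance that at least one of n annotators finds a category.

    Assumes (hypothetically) that annotators are independent and share
    the same per-annotator detection probability p_single.
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must lie in [0, 1]")
    if n_annotators < 0:
        raise ValueError("n_annotators must be non-negative")
    # A category is missed only if every annotator misses it.
    return 1.0 - (1.0 - p_single) ** n_annotators


if __name__ == "__main__":
    # 0.5 is an illustrative value, not a figure from the paper.
    for n in (1, 2, 4, 8):
        print(n, round(recall_with_annotators(0.5, n), 4))
```

Under this toy model, even a modest per-annotator detection rate gives recall above 99% with 8 annotators, which matches the direction of the estimate quoted above.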

Segmenting 2,500,000 object instances is an extremely time-consuming task, requiring over 22 worker hours per 1,000 segmentations.
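As a back-of-the-envelope check, the two figures in the sentence above imply a lower bound on the total segmentation effort. The snippet is plain arithmetic on those numbers, and the variable names are illustrative.

```python
# Figures quoted in the sentence above.
num_instances = 2_500_000
hours_per_1000 = 22  # stated as a lower bound ("over 22")

# Lower bound on worker hours spent on segmentation alone.
segmentation_hours = num_instances // 1000 * hours_per_1000
print(segmentation_hours)  # 55000
```

That lower bound of 55,000 hours would account for most of the more than 70,000 worker hours reported for the overall annotation effort.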

The task of labeling semantic objects in a scene requires that each pixel of an image be labeled as belonging to a category, such as sky, chair, floor, street, etc.

Utilizing over 70,000 worker hours, a vast collection of object instances was gathered, annotated and organized to drive the advancement of object detection and segmentation algorithms.

Segmentations of insufficient quality were discarded and the corresponding instances added back to the pool of unsegmented objects.

After 10-15 instances of a category were segmented in an image, the remaining instances were marked as “crowds” using a single (possibly multipart) segment.

For the detection of basic object categories, a multiyear effort from 2005 to 2012 was devoted to the creation and maintenance of a series of benchmark datasets that were widely adopted.

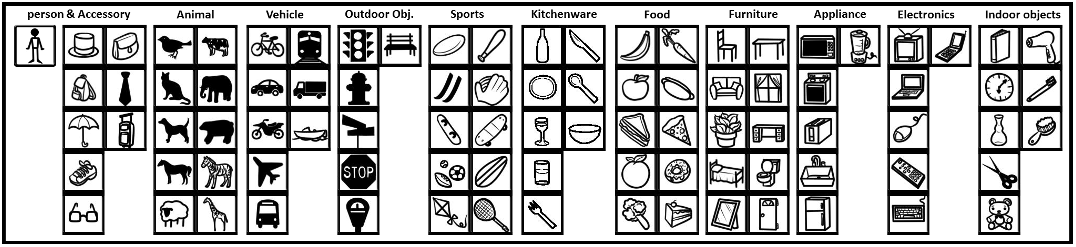

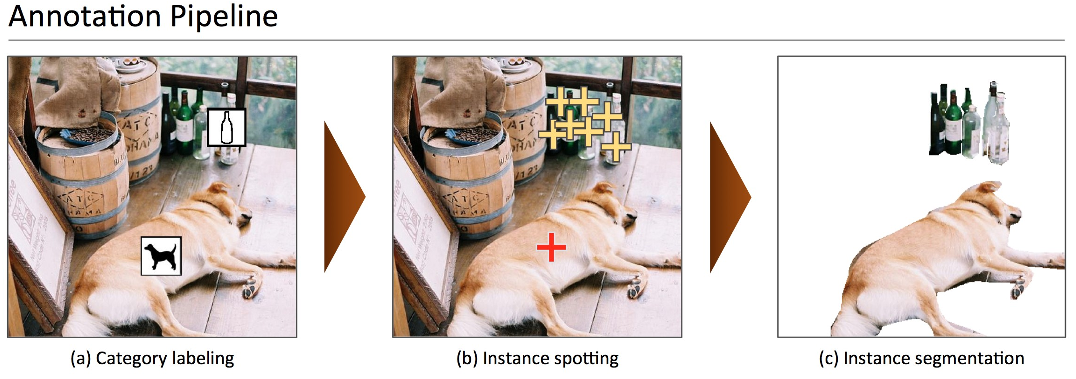

If a worker determines that instances from a super-category (e.g., animal) are present, then for each subordinate category present (dog, cat, etc.) the worker must drag that category’s icon onto one instance of it in the image.

Because the authors are primarily interested in precise localization of object instances, they decided to include only “thing” categories and not “stuff.”

For images containing 10 object instances or fewer of a given category, every instance was individually segmented (note that in some images up to 15 instances were segmented).
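The per-image, per-category rule described here can be sketched as a simple split. This is a minimal illustration, not the authors' tooling: the function name `split_instances` and the `max_individual` parameter are hypothetical, and the default of 10 is just one point in the 10-15 range the text mentions.

```python
from typing import List, Tuple


def split_instances(
    instance_ids: List[int], max_individual: int = 10
) -> Tuple[List[int], List[int]]:
    """Split one category's instances in one image into two groups.

    The first group is segmented individually; the remainder would be
    covered by a single (possibly multipart) "crowd" segment.
    """
    if max_individual < 0:
        raise ValueError("max_individual must be non-negative")
    individually_segmented = instance_ids[:max_individual]
    crowd = instance_ids[max_individual:]
    return individually_segmented, crowd


if __name__ == "__main__":
    # 13 hypothetical instances of one category in a single image.
    individual, crowd = split_instances(list(range(13)))
    print(len(individual), len(crowd))  # 10 3
```

Exposing the cutoff as a parameter reflects that the source gives a range rather than a single fixed threshold.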

“Thing” categories include objects for which individual instances may be easily labeled (person, chair, car), whereas “stuff” categories include materials and objects with no clear boundaries (sky, street, grass).

Such examples may act as noise and pollute the learned model if the model is not rich enough to capture such appearance variability.

Another interesting observation is that only 10% of the images in MS COCO contain a single object category. In comparison, over 60% of the images in ImageNet and PASCAL VOC contain only one object category.
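A statistic of this kind can be computed from per-image category labels. The sketch below assumes a hypothetical in-memory mapping from image identifiers to category names. It does not use the dataset's actual annotation format or API, and the toy data is invented purely for illustration.

```python
from typing import Dict, List


def single_category_fraction(image_labels: Dict[str, List[str]]) -> float:
    """Fraction of images whose instances all belong to one category."""
    if not image_labels:
        raise ValueError("image_labels must not be empty")
    single = sum(
        1 for labels in image_labels.values() if len(set(labels)) == 1
    )
    return single / len(image_labels)


if __name__ == "__main__":
    # Invented toy data; not drawn from any real dataset.
    toy = {
        "img_a": ["person", "person"],
        "img_b": ["dog", "frisbee", "person"],
        "img_c": ["chair", "dining table"],
        "img_d": ["cat"],
    }
    print(single_category_fraction(toy))  # 0.5
```

Note that an image with several instances of the same category still counts as single-category, since the comparison is over distinct categories rather than instance counts.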