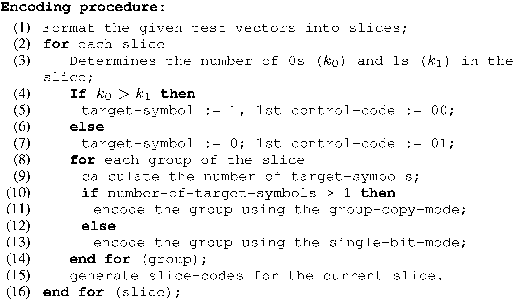

IEEE TRANSACTIONS ON VERY LARGE SCALE INTEGRATION (VLSI) SYSTEMS, VOL. 16, NO. 11, NOVEMBER 2008 1429

Test Data Compression Using Selective Encoding of Scan Slices

Zhanglei Wang, Member, IEEE, and Krishnendu Chakrabarty, Fellow, IEEE

Abstract—We present a selective encoding method that reduces

test data volume and test application time for scan testing of In-

tellectual Property (IP) cores. This method encodes the slices of

test data that are fed to the scan chains in every clock cycle. To drive $N$ scan chains, we use only $c$ tester channels, where $c = \lceil \log_2(N+1) \rceil + 2$. In the best case, we can achieve compression by a factor of $N/c$ using only one tester clock cycle per slice. We

derive a sufficient condition on the distribution of care bits that

allows us to achieve the best-case compression. We also derive a

probabilistic lower bound on the compression for a given care-bit

density. Unlike popular compression methods such as Embedded

Deterministic Test (EDT), the proposed approach is suitable for IP

cores because it does not require structural information for fault

simulation, dynamic compaction, or interleaved test generation.

The on-chip decoder is small, independent of the circuit under test

and the test set, and it can be shared between different circuits. We

present compression results for a number of industrial circuits and

compare our results to other recent compression methods targeted

at IP cores.

Index Terms—ATE pattern repeat, IP cores, scan slice, test data

compression.

I. INTRODUCTION

Test data volume is now recognized as a major contributor to the cost of manufacturing testing of integrated circuits (ICs) [1]–[4]. Recent growth in design complexity and the

integration of embedded cores in system-on-chip (SoC) ICs has

led to a tremendous growth in test data volume; industry experts

predict that this trend will continue over the next few years [5].

For example, the 2005 ITRS document predicted that the test

data volume for integrated circuits will be as much as 30 times

larger in 2010 than in 2005 [6].

High test data volume leads to an increase in testing time.

In addition, high test data volume may also exceed the lim-

ited memory depth of automatic test equipment (ATE). Mul-

tiple ATE reloads are time consuming because data transfers

from a workstation to the ATE hard disk, or from the ATE hard

disk to ATE channels are relatively slow; the upload time ranges

from tens of minutes to hours [7]. Test application time for scan testing can be reduced by using a large number of internal scan chains. However, the number of ATE channels that can directly drive scan chains is limited due to pin count constraints.

Manuscript received January 13, 2007; revised September 3, 2007. First published August 12, 2008; current version published October 22, 2008. This work was supported in part by the National Science Foundation under Grant CCR-0204077. A preliminary version of this paper was published in the Proceedings of the IEEE International Test Conference, pp. 581–590, 2005. This research was carried out when Zhanglei Wang was a Ph.D. student at Duke University.

Z. Wang was with the Department of Electrical and Computer Engineering, Duke University, Durham, NC 27708 USA. He is now with Cisco Systems Inc., San Jose, CA 95134 USA (e-mail: zhawang@cisco.com).

K. Chakrabarty is with the Department of Electrical and Computer Engineering, Duke University, Durham, NC 27708 USA (e-mail: krish@duke.edu).

Digital Object Identifier 10.1109/TVLSI.2008.2000674

Logic built-in self-test (LBIST) [8] has been proposed as a

solution for alleviating these problems. LBIST reduces depen-

dencies on expensive ATEs and allows precomputed test sets to

be embedded in test sequences generated by BIST hardware to

target random pattern resistant faults. However, the memory re-

quired to store the top-up patterns for LBIST can exceed 30% of

the memory used in a conventional automatic test pattern gener-

ation (ATPG) approach [8]. With increasing circuit complexity,

the storage of an extensive set of ATPG patterns on-chip be-

comes prohibitive [1]. Moreover, BIST can be applied directly

to SoC designs only if the embedded cores are BIST-ready; con-

siderable redesign may be necessary for incorporating BIST in

cores that are not BIST-ready.

Test data compression offers a promising solution to the

problem of increasing test data volume. A test set $T_D$ for the circuit under test (CUT) is compressed to a much smaller data set $T_E$, which is stored in ATE memory. An on-chip decoder is used to generate $T_D$ from $T_E$ during test application. A

popular class of compression schemes relies on the use of a

linear decompressor. These techniques are based on LFSR

reseeding [9]–[12] and combinational linear expansion networks consisting of XOR gates [13]–[15], and they have been

implemented in commercial tools such as TestKompress from

Mentor Graphics [1], SmartBIST from IBM/Cadence [3],

and DBIST from Synopsys [16]. These compression schemes

exploit the fact that scan test vectors typically contain a large

fraction of unspecified bits even after compaction. However,

the on-chip decoders for these techniques are specific to the

test set, which necessitates decompressor redesign if the test set

changes during design iterations. Finally, in order to achieve

the best compression, these methods resort to fault simulation

and test generation. As a result, they are less suitable for test

reuse in SoC designs based on Intellectual Property (IP) cores.

Another category of compression methods uses statistical

coding, variants of run-length coding, dictionary-based coding,

and hybrid techniques [17]–[22]. These methods exploit the

regularity inherent in test data to achieve high compression.

However, most of these schemes target single scan chains and

they require synchronization between the ATE and CUT.

We present a selective encoding method that reduces test data

volume and test application time for the scan testing of IP cores.

This method encodes the slices of test data that are fed to the

scan chains in every clock cycle. Unlike many prior methods,

the proposed method does not encode all the specified (0’s and 1’s) and unspecified (don’t care) bits in a slice. For example, if a slice contains more 1’s than 0’s, only the 0’s are encoded and all don’t cares are mapped to 1. We use only $c$ tester channels,

1063-8210/$25.00 © 2008 IEEE


where $c = \lceil \log_2(N+1) \rceil + 2$, to drive $N$ scan chains. The logarithmic reduction in the number of tester channels allows us to use a large number of internal scan chains, thereby reducing test application time significantly. In the best case, we can achieve compression by a factor of $N/c$ using only one tester clock cycle

per slice. We derive a sufficient condition on the distribution of

care bits that allows us to achieve the best-case compression.

We also present a probabilistic lower bound on the compression

for a given care bit density.

The proposed technique does not require dedicated test pins

for each core in an SoC. If cores are tested sequentially, only

one common test interface is needed. If some cores are tested

in parallel, then they can together be viewed as a larger core

with more scan chains. For example, if five cores are tested in

parallel, and each core has 255 scan chains, it is equivalent to

one core with $255 \times 5$ scan chains. The proposed technique will

therefore only require 13 pins. Meanwhile, the on-chip decoder

must be modified to handle the scan-in and capture for the five

different cores.

The pattern decompression is of the continuous-flow type be-

cause no complex handshakes are required between the tester

and the chip, and there is no need to introduce tester stall cy-

cles. Unlike popular compression methods, such as Embedded

Deterministic Test (EDT) [1], the proposed approach is suitable

for IP cores because it does not require structural information for

fault simulation, dynamic compaction, or interleaved test gen-

eration. The on-chip decoder is small, independent of the circuit

under test and the test set. We present compression results for a

number of industrial circuits, and compare our results to other

recent compression methods targeted at IP cores.

The steady increase in clock frequencies over recent years has

led to designs with a small number of gates between latches, or

between latches and input/output (I/O) pins [23]. As a result,

logic circuits today have very short combinational logic depth,

and many logic cones with very little overlap. This is in contrast

to older circuits such as the ISCAS’85 benchmarks that tend

to have a smaller number of overlapping logic cones. A conse-

quence of the shallow logic depth is that test patterns in present

day circuits contain many don’t care bits; e.g., it has been re-

ported recently that test sets for industrial circuits contain only

1%–5% care bits [24]. After a desired stuck-at coverage is ob-

tained (using methods such as dynamic compaction and fault

grading to reduce pattern count), a commercial test pattern gen-

erator typically uses random fill to increase the likelihood of

surreptitious detection of unmodeled faults. However, if the test

sets for the cores are delivered with the don’t care bits to the

system integrator, an appropriate compression method can be

used at the system level to reduce test data volume and testing

time. This imposes no additional burden on the core vendor. Un-

modeled faults can still be detected if the compression method

does not arbitrarily map all don’t cares to either 1’s or 0’s.

We do not address the problem of output compaction in this

paper. The proposed input compression method can be used

with recent output compaction methods such as X-compact [2],

convolutional compaction [25], and i-compact [26] to further re-

duce test data volume.

The rest of this paper is organized as follows. The details of

the proposed approach are described in Section II. Section III

presents the decompression architecture and Section IV presents

compression results for industrial circuits.

Fig. 1. Test application using the proposed approach.

Fig. 2. Slice-code consists of a 2-bit control-code and a $K$-bit data code.

II. PROPOSED APPROACH

As shown in Fig. 1, the proposed approach encodes the slices of test data (scan slices) that are fed to the internal scan chains. The on-chip decoder contains an $N$-bit buffer, and it manipulates the contents of the buffer according to the compressed data that it receives. After all the compressed data for a single slice is received, the data in the buffer is delivered to the scan chains. Each slice is encoded as a series of $c$-bit slice-codes, where $c = K + 2$, $K = \lceil \log_2(N+1) \rceil$, and $N$ is the number of internal scan chains in the CUT. The number of slice codes needed to encode a given slice depends on the distribution of 1’s, 0’s, and don’t cares in the slice. As shown in Fig. 2, the first two bits of a slice-code form the control-code that determines how the following $K$ bits, referred to as the data-code, are interpreted.

As described in Section I, the proposed approach only en-

codes a subset of the specified bits in a slice. First, the encoding

procedure examines the slice and determines the number of 0-

and 1-valued bits. If there are more 1’s (0’s) than 0’s (1’s), then

all X’s in this slice are mapped to 1 (0), and only 0’s (1’s) are

encoded. The 0’s (1’s) are referred to as target-symbols and are

encoded into data-codes in two modes: 1) single-bit-mode and

2) group-copy-mode.

In the single-bit-mode, each bit in a slice is indexed from 0 to $N-1$. A target-symbol is represented by a data-code that

takes the value of its index. For example, to encode the slice

XXX10000, the X’s are mapped to 0 and the only target symbol

of 1 at bit position 3 is encoded as 0011. In this mode, each target

symbol in a slice is encoded as a single slice code. Obviously,

if there are many target symbols that are adjacent or near to

each other, it is inefficient to encode each of them using separate

slice codes. Hence, the group-copy mode has been designed to

increase compression efficiency.
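The single-bit mode can be illustrated with a minimal sketch. It assumes a slice is given as a string over `0`, `1`, and `X` with bit 0 on the left, and that the data-code width is $K = \lceil \log_2(N+1) \rceil$. The function name and the tie-breaking rule (equal counts of 0's and 1's select 1 as the target symbol) are assumptions made here, not details from the paper.

```python
def single_bit_data_codes(scan_slice: str) -> tuple[str, list[str]]:
    """Return (target symbol, data codes) for a slice given as a 0/1/X string."""
    n = len(scan_slice)
    k = n.bit_length()  # data-code width K = ceil(log2(N + 1))
    ones, zeros = scan_slice.count("1"), scan_slice.count("0")
    # The minority care value is the target symbol; ties go to '1' (assumption).
    target = "0" if ones > zeros else "1"
    # Each target symbol is represented by the K-bit binary value of its index.
    codes = [format(i, f"0{k}b") for i, bit in enumerate(scan_slice) if bit == target]
    return target, codes


print(single_bit_data_codes("XXX10000"))  # ('1', ['0011'])
```

For the slice XXX10000 the sketch reproduces the data code 0011 given in the text.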

In the group-copy mode, an $N$-bit slice is divided into $M = \lceil N/K \rceil$ groups, and each group (with the possible exception of the last group) is $K$-bits wide, as shown in Fig. 3. The groups are numbered from 0 to $M-1$, with the first group (referred to as group 0) containing bits 0 to $K-1$ and so on.

If a given group contains more than one target symbol, then the

group-copy mode is used and the entire group is copied to a

data code. Two data codes are needed to encode a group. The

first data code specifies the index of the first bit of the group,

and the second data code contains the actual data. In the group-copy mode, don’t cares can be randomly filled instead of being mapped to 0 or 1 by the compression scheme.

TABLE I

EXAMPLE TO ILLUSTRATE SLICE ENCODING

Fig. 3. $N$-bit scan slice is divided into $M = \lceil N/K \rceil$ groups in the group-copy mode.

For example, let $N = 31$ and $K = 5$, i.e., each slice is 31-bits wide and consists of six 5-bit groups and one 1-bit group. To encode the slice

X1110_00000_XX00X_XXXX0_00000_00XXX_0

(the slice is shown separated by underscores into 5-bit groups),

only the three 1’s in group 0 are encoded. Since group 0 has

more than one target symbol, the group-copy mode is used,

yielding two data codes 00000 and X1110. The first data code

refers to bit 0 (first bit of group 0), and the second data code

carries the content of group 0.
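The group partition in this example can be reproduced with a short sketch. It assumes the slice is a string over `0`, `1`, and `X` with bit 0 on the left; the function name and its parameters are illustrative only.

```python
def group_copy_data_codes(scan_slice: str, k: int, target: str) -> list[tuple[str, str]]:
    """For each K-bit group holding more than one target symbol, return
    (index of the group's first bit as a K-bit data code, group content)."""
    pairs = []
    for first_bit in range(0, len(scan_slice), k):
        group = scan_slice[first_bit:first_bit + k]
        if group.count(target) > 1:
            pairs.append((format(first_bit, f"0{k}b"), group))
    return pairs


# The 31-bit slice from the text; underscores only mark the 5-bit groups.
slice_31 = "X1110_00000_XX00X_XXXX0_00000_00XXX_0".replace("_", "")
print(group_copy_data_codes(slice_31, k=5, target="1"))  # [('00000', 'X1110')]
```

Only group 0 holds more than one target symbol, so a single pair of data codes is produced, matching the codes 00000 and X1110 quoted above.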

The concept of a group is relevant only for the group-copy-

mode. If only the single-bit mode is used, we do not need to con-

sider groups at all. The underlying idea for the proposed method

is: 1) only encode target symbols; 2) the bit-index of the target

symbol is encoded in the slice code for proper decompression;

3) to further improve encoding efficiency, the group-copy mode

is introduced, in which the scan slice is divided into groups.

Since data codes are used in both modes, control codes are

needed to avoid ambiguity. Control codes 00, 01, and 10 indi-

cate that the current slice code is a single-bit mode slice code,

and control code 11 indicates the current slice code is a group-

copy mode slice code. The first slice code for any scan slice is

always a single-bit mode code, and its control code can only be

00 or 01. Hence, control codes 00 and 01 are referred to as initial-control codes and they indicate the start of a new slice. Control code 00 (01) indicates that all X’s in the current scan slice

should be mapped to 0 (1), and the target symbol for the whole

slice is 1 (0). Except the first slice code, all other single-bit mode

slice codes for the same scan slice can only have the control

code 10, i.e., simply setting the bit specified by the associated

data code to the target symbol of this slice.

Table I shows a complete example to further illustrate how scan slices are encoded into slice codes. In this example, $N = 31$ and $K = 5$. In Case 1, the slice contains only one target symbol (1), and is encoded into one slice-code using the single-bit mode. In Case 2, the slice contains no target symbols. To handle this special case, we introduce a dummy data code whose value is $N$. Since the bits in the scan slice are indexed from 0 to $N-1$, a data code with value $N$ implies that no bit should be set to the target symbol.

In Case 3 and Case 4, the same slice is encoded with the

group-copy mode turned off and on, respectively. In Case 3,

since the slice contains six target symbols, six single-bit mode

slice codes are required to encode it, resulting in negative com-

pression, which is undesirable. In Case 4, however, only four

slice codes are needed: three group-copy mode slice codes to

encode groups 0 and 1, and one single-bit mode slice code to

encode the 1 at bit 30.

As discussed before, two group-copy mode slice codes are

needed to encode a given group, one to indicate the starting bit

of the group, and the other to carry the content of the group.

However, to further improve compression efficiency, if $m$ adjacent groups are to be encoded using the group-copy mode,

they can be merged together. This optimization procedure is

referred to as group merging. As shown in Case 4, since groups

0 and 1 are adjacent, instead of using four slice codes 1100000,

11X1100, 1100101, and 1101101 to encode the two groups,

only three slice codes are needed. The code that indicates

the starting bit of group 1 (1100101) is omitted. Therefore,

to encode $m$ adjacent groups, $m+1$ group-copy mode slice codes are needed, with the first slice code indicating the starting bit of the first group, and the other $m$ group-copy mode slice codes carrying the contents of the $m$ groups. With

group merging, the number of consecutive group-copy mode

slice codes is no longer fixed. To avoid ambiguity, two series

of consecutive group-copy mode slice codes that encode two

series of adjacent groups must be interleaved by one single-bit

mode slice code.

Cases 3 and 4 also show that the group-copy mode should be

used whenever possible. Since two group-copy mode slice codes

are sufficient to encode any $K$-bit group, if a group contains

more than one target symbol, it should be encoded using the

group-copy mode.

The encoding procedure is summarized in Fig. 4. In Step 1),

each test vector is divided into a series of slices. We then encode

each slice as a series of slice codes. In Steps 3)–7), the numbers

of 0’s and 1’s are calculated, and the target symbol as well as the control code of the first slice code are set. The first slice code of each slice must contain an initial-control code (00 or 01).

Fig. 4. Encoding procedure.

Steps 8)–14) encode all the groups of a slice. For each group

of a slice, if it contains more than one target symbol, it is

encoded using the group-copy mode; otherwise, it is encoded

using the single-bit mode.

Once all groups have been encoded, the slice code gener-

ation step (Step 15) becomes straightforward. It first merges

group-copy mode slice codes that correspond to adjacent

groups, and then appropriately interleaves group-copy mode

slice codes with single-bit mode slice codes. It also ensures that

the first slice code is a single-bit mode code.

As can be seen from Fig. 4, the encoding procedure consists

of two nested loops: the outer loop is for scan slices, and the

inner loop is for groups of a given slice. Hence, its time complexity is $O(|T_D|)$, where $|T_D|$ is the total data volume of the test set in bits.
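The per-slice part of the encoding procedure can be sketched as follows. This is a minimal illustration assembled from the description in the text, not a transcription of Fig. 4. Several details are assumptions made here: ties between 0's and 1's select 1 as the target symbol, a short last group is padded with X, and a dummy data code of value $N$ is used both when no single-bit code is available for the first slice code and when two group-copy runs need a separator. The test slice `case4` is hypothetical, chosen only to be consistent with the slice codes quoted for Case 4.

```python
def encode_slice(scan_slice: str) -> list[str]:
    """Encode one scan slice (a string over 0/1/X) into (K+2)-bit slice codes."""
    n = len(scan_slice)
    k = n.bit_length()  # K = ceil(log2(N + 1))

    def bits(value: int) -> str:
        return format(value, f"0{k}b")

    # Target symbol and initial control code (ties go to target 1: assumption).
    ones, zeros = scan_slice.count("1"), scan_slice.count("0")
    target, init_ctrl = ("0", "01") if ones > zeros else ("1", "00")

    singles = []  # bit indices sent in single-bit mode
    runs = []     # (first bit, [group contents]) for merged adjacent groups
    for first_bit in range(0, n, k):
        group = scan_slice[first_bit:first_bit + k]
        hits = group.count(target)
        if hits == 1:
            singles.append(first_bit + group.index(target))
        elif hits > 1:
            content = group.ljust(k, "X")  # pad a short last group (assumption)
            if runs and runs[-1][0] + k * len(runs[-1][1]) == first_bit:
                runs[-1][1].append(content)  # group merging
            else:
                runs.append((first_bit, [content]))

    def next_single() -> str:
        # Use a pending single-bit index, or the dummy index N that sets no bit.
        return bits(singles.pop(0) if singles else n)

    codes = [init_ctrl + next_single()]  # first code is always single-bit mode
    for i, (first_bit, contents) in enumerate(runs):
        if i > 0:
            codes.append("10" + next_single())  # separate two group-copy runs
        codes.append("11" + bits(first_bit))
        codes.extend("11" + content for content in contents)
    codes.extend("10" + bits(index) for index in singles)
    return codes


case4 = "X1100_01101_X0X0X_0XX00_XXXXX_XXXXX_1".replace("_", "")
print(encode_slice(case4))  # ['0011110', '1100000', '11X1100', '1101101']
```

Each bit of the slice is inspected a constant number of times, so the cost of encoding a test set grows linearly with its size in bits.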

A. Upper and Lower Bounds on Compression

By deriving the upper and lower bounds on compression,

this section provides more insights into the proposed compres-

sion method. The maximum compression is achieved when each

slice is encoded as a single slice code. Hence, the upper bound on compression is simply $N/c$, where $N$ is the number of scan chains and $c$ is the number of ATE channels.

The upper bound can only be achieved if every slice is en-

coded as a single slice code. This, in turn, is only possible if

every scan slice contains either zero or one target symbol. However, the maximum compression factor of $N/c$ can be achieved

even if the test set contains a large fraction of care bits. Test set

relaxation methods as in [27] can be used to help approach the

upper bound. Such methods, however, require structural infor-

mation about the circuit.

To derive the lower bound, we do not consider the group-

copy mode. Note that the outcome of the group-copy mode de-

pends on the actual distribution of the target symbols in scan

slices. It is not possible to derive a bound that considers the

group-copy mode, but is independent of the distribution of the

target symbols. Since the target symbol distribution is difficult

to model and it usually changes from slice-to-slice, such an

analysis is rather difficult. Moreover, the optimization step that

merges consecutive group-copy mode slice codes further makes

the analysis even more difficult.

The lower bound is reached when each target symbol is en-

coded into a single-bit mode slice code, i.e., each group contains

at most one target symbol such that the group-copy mode is not

used for the entire test set. The lower bound can be expressed in

terms of care-bit density. Let the fraction of 1’s, 0’s, and don’t cares in any scan slice be $p_1$, $p_0$, and $p_x$, respectively. The probability $p$ that any bit in the scan slice is a target symbol is given by $p = \min\{p_0, p_1\}$. The number of target symbols in any scan slice can therefore be viewed as a random variable $X$ that follows a binomial distribution, i.e.,

$$P(X = k) = \binom{N}{k} p^k (1-p)^{N-k}, \quad 0 \le k \le N.$$

The expected number of target symbols is simply $Np$. Each target symbol in a scan slice must be mapped to one slice code, hence, the average number of slice codes for a given scan slice is $Np$. If we ignore the additional compression that can be obtained using the group-copy mode, and let $S$ be the number of scan slices, we get the following expression for the compression factor $\eta$:

$$\eta = \frac{N \cdot S}{S \cdot Np \cdot c} = \frac{1}{p\,c} = \frac{1}{p\left(\lceil \log_2(N+1) \rceil + 2\right)}. \tag{1}$$

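Note that even if we do not assume any knowledge of $p_0$ and $p_1$, a lower bound can be obtained from the knowledge of the care-bit density, i.e., $p \le (p_0 + p_1)/2$. If the care-bit density for each scan slice is different, i.e., the care-bit densities are $d_1, d_2, \ldots, d_S$ for the $S$ scan slices, respectively, we get the following bound on the compression factor $\eta$:

$$\eta \ge \frac{N \cdot S}{c \sum_{i=1}^{S} N d_i / 2} = \frac{2S}{c \sum_{i=1}^{S} d_i}. \tag{2}$$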

Equation (2) provides a more accurate bound because the first few patterns in an (ordered) test set contain many more care bits

than the latter patterns. However, computing this lower bound

requires nearly as much effort as compressing the test set.
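The two bounds can be evaluated numerically with a small sketch. It assumes that the upper bound is one $c$-bit slice code per $N$-bit slice, and that the probabilistic lower bound charges one $c$-bit slice code per target symbol, with $Np$ target symbols per slice on average. Capping the lower bound at the upper bound (every slice needs at least one slice code, which matters when $Np < 1$) is an addition made here, and the function name is hypothetical.

```python
def compression_bounds(n_chains: int, p_target: float) -> tuple[float, float]:
    """Return (lower, upper) bounds on the compression factor.

    upper: every N-bit slice is encoded as a single c-bit slice code.
    lower: every target symbol costs one c-bit slice code (no group-copy mode),
           and a slice holds N * p target symbols on average.
    """
    c = n_chains.bit_length() + 2   # c = ceil(log2(N + 1)) + 2
    upper = n_chains / c
    lower = 1.0 / (p_target * c)    # N / (N * p * c)
    # Cap at the upper bound: a slice never needs fewer than one slice code
    # (an adjustment made in this sketch, not stated in the text).
    return min(lower, upper), upper


for n in (63, 255, 1023):
    low, high = compression_bounds(n, p_target=0.02)
    print(f"N={n}: lower={low:.2f}, upper={high:.2f}")
```

For a fixed $p$, the lower bound shrinks as $N$ grows because $c$ grows with $N$, while the upper bound $N/c$ keeps increasing.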

Fig. 5 shows the probabilistic lower bound on the compression factor for different values of $N$ and $p$. The lower bound decreases with increasing $N$, since $c$ increases with $N$. In practice, due to the use of the group-copy mode, we often achieve a

larger compression factor. Thus, while the upper bound is overly

optimistic for larger values of

, the lower bound is overly pes-

simistic for larger values of

.

B. ATE Pattern Repeat

A property of the proposed compression method is that consecutive $c$-bit compressed slices fed by the ATE are often identical or compatible. Therefore, ATE pattern repeat can be used to

further reduce test data volume after selective encoding of scan

slices. In the uncompressed data sets, especially among the test

vectors that lie near the end of a test set, there are a large number

of consecutive slices that contain no target symbols. These slices

are encoded as identical single slice codes that have only dummy

data codes. With ATE pattern repeat, these slice codes can be

further compacted. Additionally, consecutive group-copy mode

slice codes can also be compacted if they are compatible. Fig. 6 shows how a set of scan slices is encoded. The example shows that some slice codes, e.g., the first two in the encoded test set, can be combined and applied using ATE pattern repeat.

Fig. 5. Lower bounds on the compression factor for different values of $N$ and $p$.

Fig. 6. Encoding example.
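The compaction of consecutive compatible slice codes can be sketched as a run-length pass over the encoded stream. The sketch assumes slice codes are strings over `0`, `1`, and `X`, and that two codes are compatible when they agree in every position where both are specified. The function names and the (pattern, repeat count) output format are illustrative and do not model any particular ATE.

```python
def compatible(a: str, b: str) -> bool:
    """Two codes are compatible if they agree wherever both are specified."""
    return all(x == y or "X" in (x, y) for x, y in zip(a, b))


def merge(a: str, b: str) -> str:
    """Combine two compatible codes, keeping every specified bit."""
    return "".join(y if x == "X" else x for x, y in zip(a, b))


def apply_pattern_repeat(codes: list[str]) -> list[tuple[str, int]]:
    """Greedily collapse runs of consecutive compatible codes into
    (pattern, repeat count) pairs."""
    runs: list[tuple[str, int]] = []
    for code in codes:
        if runs and compatible(runs[-1][0], code):
            pattern, count = runs[-1]
            runs[-1] = (merge(pattern, code), count + 1)
        else:
            runs.append((code, 1))
    return runs


stream = ["0011111", "0011111", "0011111", "1100000", "11X0000"]
print(apply_pattern_repeat(stream))  # [('0011111', 3), ('1100000', 2)]
```

Comparing each new code against the merged pattern, rather than against its immediate predecessor only, keeps every code in a run compatible with the single pattern that is finally stored.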

III. DECOMPRESSION ARCHITECTURE

Fig. 7 shows the state transition diagram of the decoder. The

decoder enters designated states and performs different opera-

tions as specified by the control codes that it receives. Initially,

the decoder is in the init state; when it receives an initial-con-

trol code, it enters the single-bit mode and performs a series of

operations referred to as P1. Table II explains the five groups of

operations (P1–P5) in Fig. 7.

Fig. 8 shows the block diagram of the decoder. The finite-state

machine (FSM) generates control signals for the other components. The $K$-bit address register is used in the group-copy mode to store the index of the first bit of the target group. This register can be incremented by $K$ to address a series of adjacent groups. The $K$-to-$N$ address decoder generates selection signals to address a single bit of the buffer. The $N$-bit buffer contains combinational logic that provides the following functionalities: 1) each bit in the buffer can be individually addressed and

2) all bits in the same group can be addressed in parallel. These

two functions are used in the single-bit mode and the group-copy

mode, respectively.
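The behavior of the decoder can be mimicked in software with a short sketch. It is a behavioral model built from the control-code semantics of Section II (00 and 01 start a slice, 10 sets one bit, 11 performs a group copy), not a model of the FSM states or of the operations P1–P5. The function name is hypothetical, the input stream is assumed to be well formed, and any X left in a copied group stands for whatever value the tester supplies.

```python
def decode(slice_codes: list[str], n_chains: int) -> list[str]:
    """Rebuild scan slices from a stream of (K+2)-bit slice codes."""
    k = n_chains.bit_length()  # K = ceil(log2(N + 1))
    slices, buffer = [], None
    target, address, in_group_copy = "1", 0, False
    for code in slice_codes:
        ctrl, data = code[:2], code[2:]
        if ctrl in ("00", "01"):
            # Initial-control code: deliver the previous slice, start a new one.
            if buffer is not None:
                slices.append("".join(buffer))
            fill = ctrl[1]                       # 00 fills with 0, 01 with 1
            target = "1" if fill == "0" else "0"
            buffer = [fill] * n_chains
        if ctrl == "11":
            if not in_group_copy:
                # First group-copy code: load the address register.
                address, in_group_copy = int(data, 2), True
            else:
                # Following group-copy codes: copy one group, advance by K.
                width = min(k, n_chains - address)
                buffer[address:address + width] = data[:width]
                address += k
        else:
            # Single-bit mode: set the addressed bit; index N is the dummy code.
            in_group_copy = False
            index = int(data, 2)
            if index < n_chains:
                buffer[index] = target
    if buffer is not None:
        slices.append("".join(buffer))
    return slices


print(decode(["000011"], 8))  # ['00010000']
```

The address counter advances by $K$ after each copied group, which is what allows a merged run of adjacent groups to be sent without repeating the starting index.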

Fig. 7. State transition diagram of the decoder.

The 2-bit input signal is the control-code from the

tester. The signal

, when asserted to 0, resets the FSM to

its initial state. The signal

is set to high when the decoding

process for a slice is finished and the content of the buffer is

shifted to the internal scan chains.

If the signal

is asserted, the decoder works in the

group-copy mode. The

is used to increment the address register by $K$. In the group-copy mode, the $K$-to-$N$ address decoder receives input from the address register; in the single-bit-mode, it receives input from the data-code input. The $N$-bit selection signal is used to address a single bit in the buffer. At any given time only one of the $N$ wires is asserted.

The second bit of the control code

, i.e., the target

symbol, is latched to

. In the single-bit mode, the specified bit

is set to

. If the control code is 00 or 01, the signal

is asserted, and all other bits except the specified bit are set to

the complement of

. The signal is asserted whenever

the buffer contents are to be changed. The signal

is set to 0

only during the first clock cycle of the group-copy mode; at the

same time the address of the first bit of the group is loaded to

the address register.

The

signal from the address decoder can only address a

single bit of the buffer. However, in the group-copy mode, all

the $K$ bits in the target group should be addressed (the last group


![TABLE IX COMPARISON WITH THE TEST DATA MUTATION METHOD [29]](/figures/table-ix-comparison-with-the-test-data-mutation-method-29-3tu728xs.png)