SpeechDat II VoiceClass

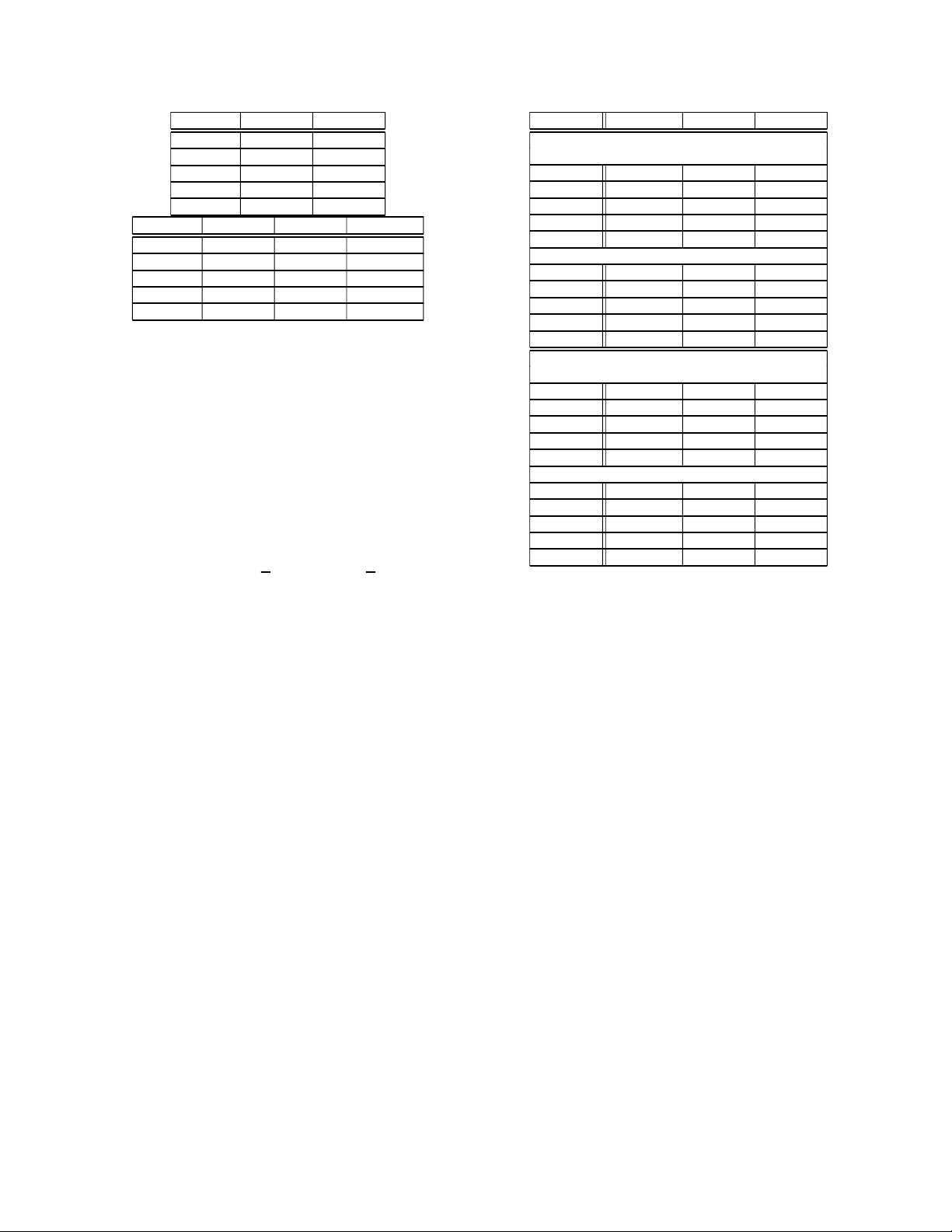

System precision recall precision recall

GMM ([4]) 42% 46% 64% 65%

PPR 54% 55% 60% 58%

GMM-UBM 49% 41% 65% 63%

SVM 77% 74% 61% 60%

HUMAN 55% 69% – –

Table 3. Overall precision and recall for the best two systems of

[4] (GMM and parallel phone recognizer [PPR]) and of our two sys-

tems; t ested on the two different corpora; the last row shows the

performance of human listeners

ac\cl C YF AF SF YM AM SM

C 83 88

YF 55 20 15 5 5

AF 10 30 35 5 20

SF 25 4 8 33 821

YM 551030 545

AM 16 5 5 47 26

SM 28 6 6 17 44

Table 4. Relative confusion matrix of the best GMM-UBM system (see text) on the SpeechDat II corpus; the columns contain the actual age (ac) and the rows contain the classified age (cl) (overall precision 49%).

not significant (p>0.1). Note that the F-measure [12] of the SVM system is higher than the F-measure calculated from the results of the human listeners (with weights of 0.5, 1 and 2). To compare the robustness of the two approaches against data from different domains and channels, we used the already trained GMMs (or SVMs, respectively) and tested them on the VoiceClass database. Both of our systems appear to be robust: on VoiceClass, the differences among the four approaches are negligible (precision between 60% and 65%).
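The F-measure comparison can be checked with a minimal sketch. It assumes that the "weights" of 0.5, 1 and 2 are the β values of van Rijsbergen's F-measure [12], and it applies the formula to the overall SpeechDat II precision and recall from Table 3; the text does not say at which level the F-measure was computed, so this is illustrative only. The names `f_measure`, `SYSTEMS` and `BETAS` are hypothetical.

```python
def f_measure(precision: float, recall: float, beta: float = 1.0) -> float:
    """F-measure with weight beta: beta > 1 favours recall, beta < 1 favours precision."""
    if precision <= 0.0 and recall <= 0.0:
        return 0.0  # avoid division by zero when both scores are zero
    b2 = beta * beta
    return (1.0 + b2) * precision * recall / (b2 * precision + recall)


# Overall precision and recall on SpeechDat II, taken from Table 3.
SYSTEMS = {"SVM": (0.77, 0.74), "HUMAN": (0.55, 0.69)}

# Assumption: the three "weights" in the text are these beta values.
BETAS = (0.5, 1.0, 2.0)


def f_table() -> dict:
    """Return {system: {beta: F-measure}} for every system and beta."""
    return {
        name: {beta: f_measure(p, r, beta) for beta in BETAS}
        for name, (p, r) in SYSTEMS.items()
    }


if __name__ == "__main__":
    for name, scores in f_table().items():
        print(name, {beta: round(value, 3) for beta, value in scores.items()})
```

Because the SVM system has both higher precision and higher recall than the human listeners on this corpus, its F-measure is higher for any choice of β, not only for the three values listed.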

6. CONCLUSION

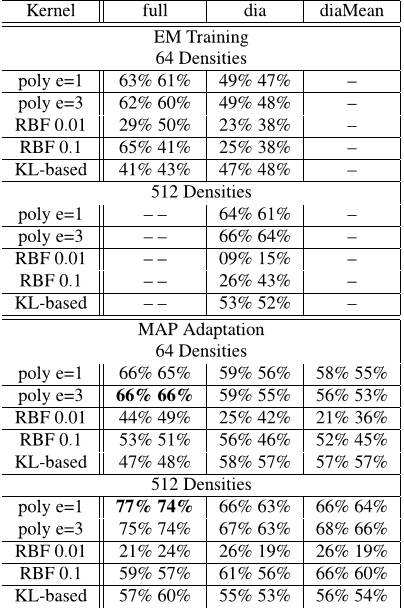

ac\cl C YF AF SF YM AM SM

C 66 33

YF 5 75 20

AF 75 25

SF 42075

YM 85 15

AM 15 78 5

SM 552761

Table 5. Relative confusion matrix of the best GMM supervector-based SVM system (see text) on the SpeechDat II corpus; the columns contain the actual age (ac) and the rows contain the classified age (cl) (overall precision 77%).

We applied the GMM supervector-based SVM approach to automatic age recognition in combination with gender recognition. We compared this approach to the GMM-UBM approach, which is state of the art for text-independent speaker identification, and to the PPR system of [4]. We investigated only spectral features. The SVM systems outperformed all of these approaches on the same-domain corpus. Compared to the best system of [4] (PPR), we improved the precision by 43% relative (from 54% to 77%) and the recall by 35% relative (from 55% to 74%) (significance: p<0.001).
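The quoted gains of 43% and 35% match relative improvements over the PPR figures for precision and recall on SpeechDat II in Table 3. The following sketch reproduces that arithmetic under this assumption; the function name `relative_improvement` and the dictionaries are hypothetical.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Relative gain of `improved` over `baseline`, as a fraction of the baseline."""
    if baseline <= 0.0:
        raise ValueError("baseline must be positive")
    return (improved - baseline) / baseline


# Overall SpeechDat II figures from Table 3.
PPR = {"precision": 0.54, "recall": 0.55}
SVM = {"precision": 0.77, "recall": 0.74}

# Relative gain of the SVM system over PPR for each metric.
GAINS = {metric: relative_improvement(PPR[metric], SVM[metric]) for metric in PPR}

if __name__ == "__main__":
    for metric, gain in GAINS.items():
        print(f"{metric}: {gain * 100:.0f}% relative improvement")
```

In absolute terms the same differences are 23 percentage points of precision and 19 percentage points of recall.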

7. REFERENCES

[1] R. Cowie, E. Douglas-Cowie, N. Tsapatsoulis, G. Votsis, S. Kollias, W. Fellenz, and J. Taylor, “Emotion Recognition in Human-Computer Interaction,” IEEE Signal Processing Magazine, vol. 18, no. 1, pp. 32–80, 2001.

[2] N. Minematsu, K. Yamauchi, and K. Hirose, “Automatic estimation of perceptual age using speaker modeling techniques,” in Proceedings Interspeech 2003, Geneva, Switzerland, 2003, pp. 3005–3008.

[3] C. Müller, F. Wittig, and J. Baus, “Exploiting Speech for Recognizing Elderly Users to Respond to their Special Needs,” in Proceedings Interspeech 2003, Geneva, Switzerland, 2003, pp. 1305–1308.

[4] F. Metze, J. Ajmera, R. Englert, U. Bub, F. Burkhardt, J. Stegmann, C. Müller, R. Huber, B. Andrassy, J.G. Bauer, and B. Littel, “Comparison of Four Approaches to Age and Gender Recognition for Telephone Applications,” in ICASSP 2007 Proceedings, IEEE International Conference on Acoustics, Speech and Signal Processing, Honolulu, Hawai’i, USA, 2007, vol. 4, pp. 1089–1092.

[5] D. A. Reynolds, T. F. Quatieri, and R. B. Dunn, “Speaker Verification using Adapted Gaussian Mixture Models,” Digital Signal Processing, pp. 19–41, 2000.

[6] D. A. Reynolds, “An Overview of Automatic Speaker Recognition Technology,” in ICASSP 2002 Proceedings, IEEE International Conference on Acoustics, Speech, and Signal Processing, Orlando, Florida, USA, 2002, vol. 4, pp. 4072–4075.

[7] W. M. Campbell, D. E. Sturim, and D. A. Reynolds, “Support Vector Machines Using GMM Supervectors for Speaker Verification,” Signal Processing Letters, IEEE, vol. 13, pp. 308–311, 2006.

[8] A. Dempster, N. Laird, and D. Rubin, “Maximum Likelihood from Incomplete Data via the EM Algorithm,” Journal of the Royal Statistical Society, Series B (Methodological), vol. 39, no. 1, pp. 1–38, 1977.

[9] J.L. Gauvain and C.H. Lee, “Maximum A-Posteriori Estimation for Multivariate Gaussian Mixture Observations of Markov Chains,” IEEE Transactions on Speech and Audio Processing, vol. 2, pp. 291–298, 1994.

[10] C. J. C. Burges, “A Tutorial on Support Vector Machines for Pattern Recognition,” Data Mining and Knowledge Discovery, vol. 2, no. 2, pp. 121–167, 1998.

[11] R. Dehak, N. Dehak, P. Kenny, and P. Dumouchel, “Linear and Non Linear Kernel GMM Support Vector Machines for Speaker Verification,” in Proceedings Interspeech 2007, Antwerp, Belgium, 2007.

[12] C. J. van Rijsbergen, Information Retrieval, Butterworths, London, 2nd edition, 1979.

![Table 1. Precision and recall on the SpeechDat II corpus with different training algorithms (EM-f ↔ EM with full covariance matrices; EM-d ↔ EM with diagonal covariance matrices; MAP-f ↔ MAP with full covariance matrices; MAP-d↔MAP with diagonal covariance matrix; MAP-dM ↔ MAP with diagonal covariance matrices [only means are adapted])](/figures/table-1-precision-and-recall-on-the-speechdat-ii-corpus-with-2kduuj5s.png)

![Table 3. Overall precision and recall for the best two systems of [4] (GMM and parallel phone recognizer [PPR]) and of our two systems; tested on the two different corpora; the last row shows the performance of human listeners](/figures/table-3-overall-precision-and-recall-for-the-best-two-35pdc56l.png)